Web Scraping with JavaScript Using Gaffa

Learn how to scrape the web in JavaScript using Gaffa's REST API with no browser setup or third-party libraries.

Apr 14, 2026

Web scraping sounds simple until you're buried in headless browser config, anti-bot workarounds, and proxy management before you've written a single line of business logic. Gaffa's REST API approach means you can scrape the web from any language that can send an HTTP request (JavaScript, Python, Ruby, Go, and so on), with no third-party scraping libraries, no browser configuration, and no infrastructure to maintain.

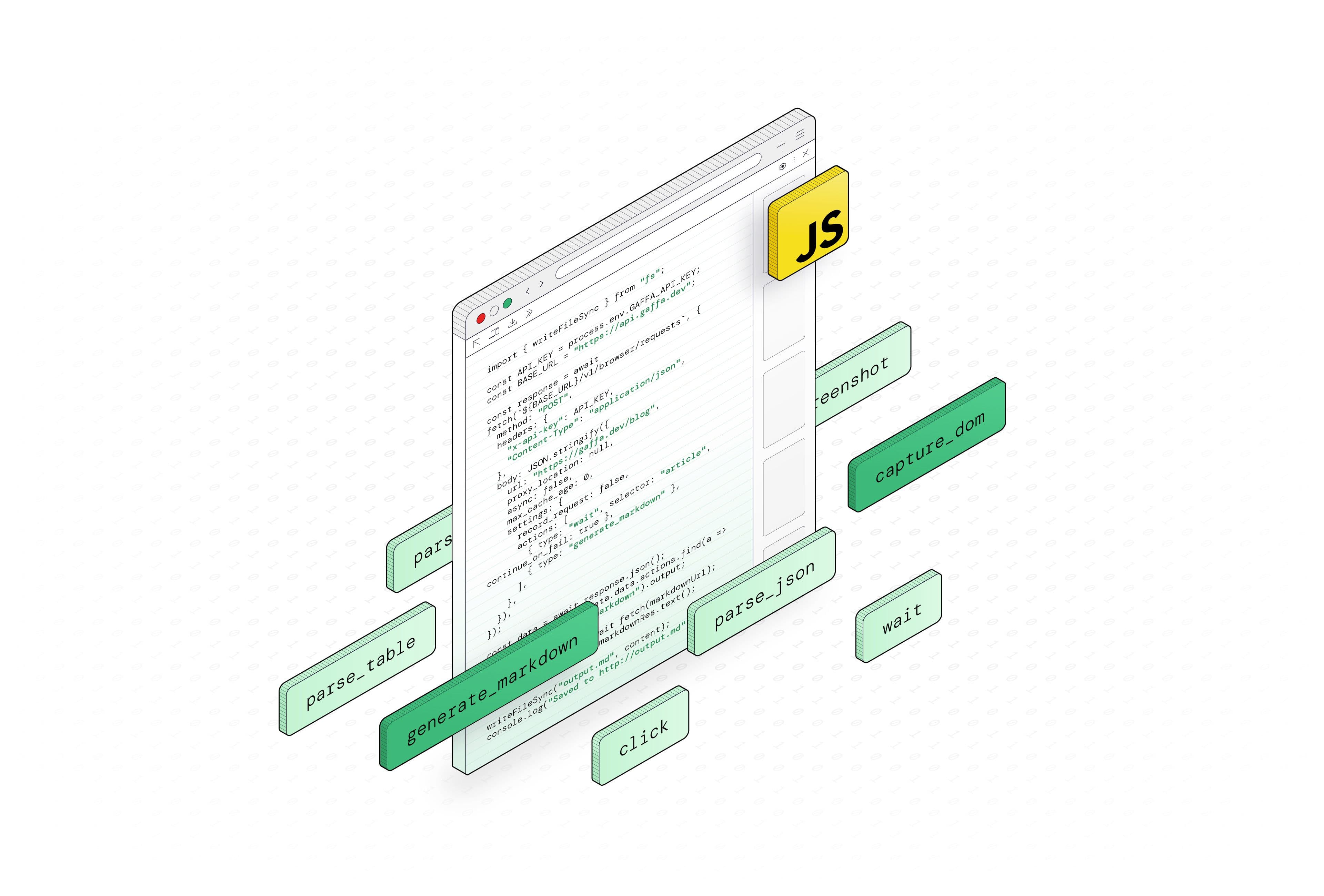

In this post, we'll walk through how to get started with web scraping in JavaScript using Gaffa, covering two of its most useful actions:

- generate_markdown for extracting readable content and

- capture_dom for pulling structured data.

Getting started

You'll need a Gaffa API key. Sign up at gaffa.dev and create your key from the API Keys section of your dashboard. Once you have it, every request you make to the Gaffa API should include it as an X-API-Key header.
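As a minimal sketch of how a script might load the key and attach it to each request: the code below assumes the key is stored in an environment variable named GAFFA_API_KEY. That name is hypothetical, so use whichever name you export. Only the X-API-Key header comes from Gaffa's instructions; the JSON content type reflects the fact that requests are sent as JSON.

```javascript
// Read the Gaffa API key from the environment and build request headers.
// GAFFA_API_KEY is a hypothetical variable name; rename it to match your setup.
function getApiKey(env = process.env) {
  const key = env.GAFFA_API_KEY;
  if (!key) {
    throw new Error("GAFFA_API_KEY is not set; export it before running the script.");
  }
  return key;
}

// Every request carries the key in the X-API-Key header and sends a JSON body.
function buildHeaders(apiKey) {
  return {
    "Content-Type": "application/json",
    "X-API-Key": apiKey,
  };
}
```

In a script you would then call buildHeaders(getApiKey()) once and reuse the result. Failing fast on a missing key gives a clearer error than a rejected request later on.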

The examples in this post run in Node.js. If you don't have it installed, grab it from nodejs.org. Any recent version will do.

Before running, make sure your API key is available as an environment variable so the script can read it without hard-coding the key in your source.

Next, save the example code as a .js file (for example, script.js).

Then run it from your terminal with node script.js.

The examples use top-level await, which Node.js supports in ES modules from version 14.8 onward. If Node.js treats your file as CommonJS and reports an error on await, either:

- Add "type": "module" to your package.json, or

- Rename your file to .mjs
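If you'd rather keep the file as CommonJS, another common option is to wrap the awaited code in an async function and call it once. In the sketch below, main is a placeholder for the request code from the examples.

```javascript
// CommonJS-friendly alternative to top-level await:
// put the awaited work inside an async function and run it once.
async function main() {
  // Placeholder for the real work, e.g. a fetch call to the Gaffa API.
  const value = await Promise.resolve("done");
  return value;
}

main()
  .then((result) => console.log(result))
  .catch((err) => {
    // Report the failure and signal it through the process exit code.
    console.error(err);
    process.exitCode = 1;
  });
```

Setting process.exitCode instead of calling process.exit() lets any pending output flush before Node.js exits.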

All requests are sent as JSON to the /v1/browser/requests endpoint. The basic structure of a request looks like this:

Basic Request Structure
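Here is a minimal sketch of that structure in JavaScript. The /v1/browser/requests path, the X-API-Key header, and the actions array come from this post. The base URL https://api.gaffa.dev, the url field, and the { type, selector } shape of each action are assumptions, so check them against the Gaffa API reference before relying on them. The sketch uses the fetch API built into Node.js 18 and later.

```javascript
// Assumed base URL; the /v1/browser/requests path is from the Gaffa docs.
const GAFFA_ENDPOINT = "https://api.gaffa.dev/v1/browser/requests";

// Build a request body: the page to visit plus an ordered list of actions.
// Field names other than `actions` are assumptions about the schema.
function buildRequestBody(url, actions, options = {}) {
  return { url, ...options, actions };
}

// POST the body as JSON with the API key in the X-API-Key header.
// `fetchImpl` defaults to Node's global fetch and can be swapped in tests.
async function sendGaffaRequest(body, apiKey, fetchImpl = fetch) {
  const res = await fetchImpl(GAFFA_ENDPOINT, {
    method: "POST",
    headers: { "Content-Type": "application/json", "X-API-Key": apiKey },
    body: JSON.stringify(body),
  });
  if (!res.ok) {
    throw new Error(`Gaffa request failed with HTTP ${res.status}`);
  }
  return res.json();
}

// Example body: wait for an <article>, then convert the page to markdown.
const exampleBody = buildRequestBody("https://example.com", [
  { type: "wait", selector: "article" },
  { type: "generate_markdown" },
]);
```

In an ES module you could then call await sendGaffaRequest(exampleBody, apiKey) and inspect the parsed JSON it returns. Passing fetchImpl as a parameter keeps the function easy to test without touching the network.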

The actions array is where the real work happens. You specify exactly what you want done and in what order, and Gaffa carries out the actions sequentially in a real cloud browser. To wait for content to load, click a button, and then capture the resulting DOM, list those three actions in that order. To scroll an infinite feed before generating markdown, list the scroll action first and generate_markdown after it. Each action performs one specific task, and together they define the full automation. You can find the complete list of available actions in the Gaffa documentation.
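To make that ordering explicit in code, here is a small, hypothetical helper (not part of any Gaffa SDK) that builds the actions array step by step. The wait, capture_dom, and generate_markdown names come from this post; the "click" and "scroll" type names, the selector field, and the example selectors are assumptions for illustration.

```javascript
// Hypothetical fluent helper for assembling an ordered Gaffa actions array.
// Gaffa runs actions in the order they appear, so call order matters here.
function actionList() {
  const steps = [];
  const builder = {
    // Generic step: `type` is the action name, `params` any extra fields.
    add(type, params = {}) {
      steps.push({ type, ...params });
      return builder;
    },
    // Shorthand for waiting until a CSS selector appears in the DOM.
    wait(selector) {
      return builder.add("wait", { selector });
    },
    // Return copies so later calls don't mutate an array already sent.
    build() {
      return steps.map((step) => ({ ...step }));
    },
  };
  return builder;
}

// Wait for content, click a button, then capture the resulting DOM.
// "click" and the selectors are assumed names for illustration.
const clickThenCapture = actionList()
  .wait("article")
  .add("click", { selector: "#load-more" })
  .add("capture_dom")
  .build();

// Scroll an infinite feed, then generate markdown ("scroll" is assumed too).
const scrollThenMarkdown = actionList()
  .add("scroll")
  .add("generate_markdown")
  .build();
```

The resulting arrays go into the actions field of the request body unchanged.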

Example 1: Extracting page content with generate_markdown

If you want to pull the readable content from a page, such as an article, a product description, or a blog post, the generate_markdown action is the cleanest way to do it. It strips away navigation bars, ads, scripts, and other noise, and returns the core content as a clean markdown file.

This is especially useful if you're feeding the output into an LLM. We cover this in more detail in Convert any webpage to LLM-ready Markdown using Gaffa.

Here's a complete script that fetches a page, converts it to markdown, and saves it as a .md file on your machine:

Extracting Page Content with generate_markdown
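The following is a minimal sketch of that flow under stated assumptions: the base URL https://api.gaffa.dev, a { url, actions } request body, and a response in which each entry of data.actions carries an output URL pointing at the generated file. Those response fields are guesses, so log the raw JSON first and adjust findActionOutput (a hypothetical helper) to match what Gaffa actually returns. The sketch uses the built-in fetch from Node.js 18+ and a dynamic import of node:fs/promises, so it runs as either an ES module or CommonJS.

```javascript
// Minimal sketch: render a page with Gaffa, generate markdown, save it locally.
// Assumed: base URL, request field names, and the response shape.
const GAFFA_ENDPOINT = "https://api.gaffa.dev/v1/browser/requests";

// Hypothetical helper: find the output of the first action of a given type.
function findActionOutput(response, type) {
  const actions = response?.data?.actions ?? [];
  const action = actions.find((a) => a.type === type);
  if (!action?.output) {
    throw new Error(`No output found for action "${type}"`);
  }
  return action.output;
}

// Default writer; dynamic import works from both ES modules and CommonJS.
async function saveToFile(path, text) {
  const { writeFile } = await import("node:fs/promises");
  await writeFile(path, text, "utf8");
}

async function scrapeToMarkdown(
  pageUrl,
  { apiKey, outFile = "output.md", fetchImpl = fetch, save = saveToFile } = {}
) {
  const res = await fetchImpl(GAFFA_ENDPOINT, {
    method: "POST",
    headers: { "Content-Type": "application/json", "X-API-Key": apiKey },
    body: JSON.stringify({
      url: pageUrl,
      actions: [
        // Wait until an <article> element exists so dynamic content has loaded.
        { type: "wait", selector: "article" },
        // Convert the page's main content to markdown.
        { type: "generate_markdown" },
      ],
    }),
  });
  if (!res.ok) throw new Error(`Gaffa request failed with HTTP ${res.status}`);

  // Download the markdown from the output URL (assumed to need no extra auth).
  const markdownUrl = findActionOutput(await res.json(), "generate_markdown");
  const mdRes = await fetchImpl(markdownUrl);
  if (!mdRes.ok) throw new Error(`Downloading markdown failed with HTTP ${mdRes.status}`);
  const markdown = await mdRes.text();

  await save(outFile, markdown);
  console.log(`Saved to ${outFile}`);
  return markdown;
}
```

In an ES module you might call await scrapeToMarkdown("https://example.com/blog/some-post", { apiKey }), where apiKey is the key read from your environment and the URL is only a placeholder.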

The wait action tells Gaffa to hold off until an article element appears in the DOM before proceeding. This is important for pages where content loads dynamically after the initial HTML. Without it, you might capture a loading spinner rather than the actual content.

Once the request completes, the script fetches the markdown content and saves it as output.md in the same folder as the script. You'll see Saved to output.md in the terminal when it's done.

Example 2: Extracting raw HTML with capture_dom

When you need more control over the data you extract, use the capture_dom action. It gives you the full HTML of the rendered page, which is what you want for pulling specific elements, scraping product listings, or parsing structured content.

Here's a complete script that captures the DOM of Wikipedia's Artificial Intelligence article and saves it as an output.html file you can open directly in your browser:

Extracting Raw HTML with capture_dom
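Here is a minimal sketch of the same idea for capture_dom, with the same caveats: the base URL, the { url, actions } body, and the data.actions[].output response field are assumptions. Because the exact output format isn't documented here, the sketch accepts either a URL to the captured file or the HTML itself. It needs Node.js 18+ for the built-in fetch.

```javascript
// Minimal sketch: capture the rendered DOM of a page with Gaffa and save it.
// Assumed: base URL, request field names, and the response shape.
const GAFFA_ENDPOINT = "https://api.gaffa.dev/v1/browser/requests";
const PAGE_URL = "https://en.wikipedia.org/wiki/Artificial_intelligence";

// Assumed response shape: the capture_dom action's `output` is either a URL
// to the captured file or the HTML string itself; handle both.
async function resolveDomOutput(response, fetchImpl) {
  const action = (response?.data?.actions ?? []).find((a) => a.type === "capture_dom");
  if (!action?.output) throw new Error("No capture_dom output in response");
  if (!/^https?:\/\//.test(action.output)) return action.output; // inline HTML
  const res = await fetchImpl(action.output);
  if (!res.ok) throw new Error(`Downloading DOM failed with HTTP ${res.status}`);
  return res.text();
}

// Default writer; dynamic import works from both ES modules and CommonJS.
async function saveToFile(path, text) {
  const { writeFile } = await import("node:fs/promises");
  await writeFile(path, text, "utf8");
}

async function captureDom(
  pageUrl,
  { apiKey, outFile = "output.html", fetchImpl = fetch, save = saveToFile } = {}
) {
  const res = await fetchImpl(GAFFA_ENDPOINT, {
    method: "POST",
    headers: { "Content-Type": "application/json", "X-API-Key": apiKey },
    body: JSON.stringify({ url: pageUrl, actions: [{ type: "capture_dom" }] }),
  });
  if (!res.ok) throw new Error(`Gaffa request failed with HTTP ${res.status}`);

  const html = await resolveDomOutput(await res.json(), fetchImpl);
  await save(outFile, html);
  console.log(`Saved to ${outFile}`);
  return html;
}
```

In an ES module, await captureDom(PAGE_URL, { apiKey }) would write output.html next to the script.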

Once the script runs, open output.html in your browser and you'll see the fully rendered page, exactly as Gaffa captured it. From here, you can parse it locally using a library like node-html-parser or cheerio to extract specific elements like tables, headings, links, or any other structured content you need.

This approach gives you full flexibility. Whether you need to clean up values, handle merged cells, skip header rows, or transform data before saving it, you have complete control over the parsing step.
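As one example of that parsing step, suppose you've already used cheerio or node-html-parser to turn a table into rows of cells, each with its text and any rowspan or colspan value. The plain JavaScript sketch below (no library calls) expands merged cells into a rectangular grid, strips Wikipedia-style footnote markers such as [1], and turns the rows below the header into objects. The { text, rowspan, colspan } cell shape is an assumption about how you extract cells, not something either library returns directly.

```javascript
// Normalize cell text: drop footnote markers like "[1]" and collapse whitespace.
function cleanCellText(text) {
  return String(text ?? "").replace(/\[\d+\]/g, "").replace(/\s+/g, " ").trim();
}

// Expand rows of { text, rowspan, colspan } cells into a rectangular grid,
// copying each merged cell's text into every slot it covers.
function expandMergedCells(rows) {
  const grid = rows.map(() => []);
  rows.forEach((cells, r) => {
    let c = 0;
    for (const cell of cells) {
      // Skip slots already filled by a rowspan from an earlier row.
      while (grid[r][c] !== undefined) c++;
      const rowspan = cell.rowspan ?? 1;
      const colspan = cell.colspan ?? 1;
      const text = cleanCellText(cell.text);
      // Fill the covered block, ignoring rowspans that run past the table.
      for (let dr = 0; dr < rowspan && r + dr < grid.length; dr++) {
        for (let dc = 0; dc < colspan; dc++) {
          grid[r + dr][c + dc] = text;
        }
      }
      c += colspan;
    }
  });
  return grid;
}

// Use the first row as headers and turn the remaining rows into objects.
function toRecords(grid) {
  const [header = [], ...body] = grid;
  return body.map((row) =>
    Object.fromEntries(header.map((key, i) => [key, row[i] ?? ""]))
  );
}
```

Expanding merged cells before building records means every record has a value for every header, which makes the data much easier to save as JSON or CSV.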

A few other things worth knowing

- Dynamic content: If a page loads content after the initial HTML (common on JS-heavy sites), use the wait action to pause until a specific element appears before proceeding.

- Geo-restricted sites: For sites that block access based on location, add proxy_location: "us" (or another supported region) as a top-level parameter in your request body to route the request through an IP address in that region. The Gaffa documentation lists the supported regions.

- Async mode: For slow-loading pages or when processing multiple URLs at once, you can set "async": true in your request. Instead of waiting for the result, Gaffa returns a request ID immediately and processes the job in the background. You then poll for the result when you're ready. You can read more about how it works in the Gaffa docs.
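The polling endpoint and status values aren't covered in this post, so here is a generic, hedged sketch of the polling loop itself. The request body shows the two top-level parameters mentioned above (proxy_location and async); its other fields are assumptions. checkStatus is a function you supply that fetches the current state of a request ID, and the status strings "completed" and "failed" are placeholders to replace with the values Gaffa documents.

```javascript
// Request body for a background job routed through a US proxy.
// proxy_location and async are top-level parameters; other fields are assumed.
const asyncBody = {
  url: "https://example.com",
  proxy_location: "us",
  async: true,
  actions: [{ type: "generate_markdown" }],
};

const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Poll `checkStatus(id)` until the job finishes, fails, or times out.
// "completed" and "failed" are placeholder status names, not Gaffa's documented ones.
async function pollUntilDone(id, checkStatus, { intervalMs = 2000, timeoutMs = 120000 } = {}) {
  const deadline = Date.now() + timeoutMs;
  while (Date.now() < deadline) {
    const result = await checkStatus(id);
    if (result.status === "completed") return result;
    if (result.status === "failed") {
      throw new Error(`Request ${id} failed`);
    }
    await sleep(intervalMs);
  }
  throw new Error(`Timed out waiting for request ${id}`);
}
```

A fixed interval keeps the sketch simple; for many concurrent jobs you may prefer to lengthen the interval after each attempt so you poll less aggressively.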

What else can you do?

Beyond content extraction and DOM capture, Gaffa supports additional actions for more complex scraping tasks:

- parse_table: Finds a table on the page and returns its contents as a clean JSON array, with headers automatically normalized into object keys. No HTML parsing needed.

- parse_json: Uses an AI model to extract structured data from a page according to a schema you define. Rather than returning raw HTML for you to process, it reads the page and returns a clean JSON object shaped exactly to your specification. This is useful for pages with complex or inconsistent layouts where a CSS selector approach would be brittle.

- capture_screenshot: Takes a full-page screenshot. Handy for debugging or visual monitoring.

- Page interactions: Gaffa also supports a set of interaction actions, such as clicking elements, typing into fields, and scrolling (including infinite scroll), that let you manipulate the page before capturing data. These are useful for handling cookie banners, login flows, search forms, or loading more content before extraction. You can also use print to export the full page as a PDF.

You can mix and match these in a single request. For example, you might wait for content to load, click a button to expand a section, then capture_dom to grab the result.
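Written out as a single request body, a mixed request might look like the sketch below: wait for the main content, then capture both the DOM and a screenshot in one run. As in the other sketches, the url and selector fields are assumptions, and "main" is only an example selector. Printing the body with JSON.stringify gives you JSON you can paste into the API Playground to check the request before wiring up a full script.

```javascript
// One request that waits for content, then captures the DOM and a screenshot.
// Field names and the selector are illustrative assumptions.
const combinedBody = {
  url: "https://example.com/product",
  actions: [
    { type: "wait", selector: "main" },
    { type: "capture_dom" },
    { type: "capture_screenshot" },
  ],
};

// Pretty-print the payload, e.g. to paste into the Gaffa API Playground.
const payloadJson = JSON.stringify(combinedBody, null, 2);
console.log(payloadJson);
```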

Don't want to write code yet?

If you want to test your actions before wiring them up in JavaScript, the Gaffa API Playground lets you run requests directly in your browser with no code required. Just paste in your JSON payload, hit Send, and see the output immediately. It's a great way to verify your selectors and ensure the data looks correct before integrating it into your application.

The Gaffa API Playground

Web scraping in JavaScript doesn't need to mean managing headless Chrome, wiring up Puppeteer or Playwright, dealing with proxies, or fighting anti-bot measures. With Gaffa, you send a POST request and get your data back in any language, from any stack. Sign up at gaffa.dev, grab your API key, and give it a try in the playground.
