How to Scrape a Table with Python (The Easy Way)

Learn how to extract table data from any website using Python and Gaffa — no browser setup, no boilerplate, just clean structured data in a few lines of code.

Apr 07, 2026

Web tables are goldmines of structured data, interest rate histories, sports standings, financial reports, and product comparisons. The problem is getting that data out cleanly. In this post, we'll walk through two ways to scrape a table using Python and Gaffa.

- Manually, using capture_dom and BeautifulSoup

- Automatically, using Gaffa's parse_table action.

You can find the full code for this post in our Python examples repository on GitHub.

The problem with traditional table scraping

Most tutorials recommend using Playwright or Selenium to load a page and retrieve the HTML. That works, but it means you're managing browser infrastructure yourself, dealing with headless Chrome setups, handling anti-bot detection, rotating proxies, and writing a non-trivial amount of boilerplate just to get to the data.

Gaffa handles all of that complexity for you. You send a POST request, and Gaffa spins up a real browser, handles anti-bot measures, optionally routes through proxies, and returns exactly what you ask for, no browser setup on your end.

We'll demonstrate two approaches to scraping tables using two different sites.

Getting Started

You'll need a Gaffa API key. Sign up at gaffa.dev and create your key in the API Keys section of the dashboard. For setup instructions, see the README in our GitHub samples repo.

Approach 1: Capture DOM + BeautifulSoup

The capture_dom approach gives you maximum control. Gaffa fetches the page and returns the raw HTML DOM. You then parse it locally with BeautifulSoup and extract the table data yourself.

Step 1: Fetch the DOM with Gaffa

Capturing a page DOM with Gaffa

The wait action tells Gaffa to wait until a table element appears in the DOM, which is useful for pages where the table loads dynamically. The capture_dom action then returns the full HTML as a file URL, which we fetch separately.

Step 2: Parse the table with BeautifulSoup

Parsing a Table from the DOM using BeautifulSoup

Step 3: Run it and save the output

Saving the parsed table

Sample output:

Sample parsed table output

See the full sample output on GitHub.

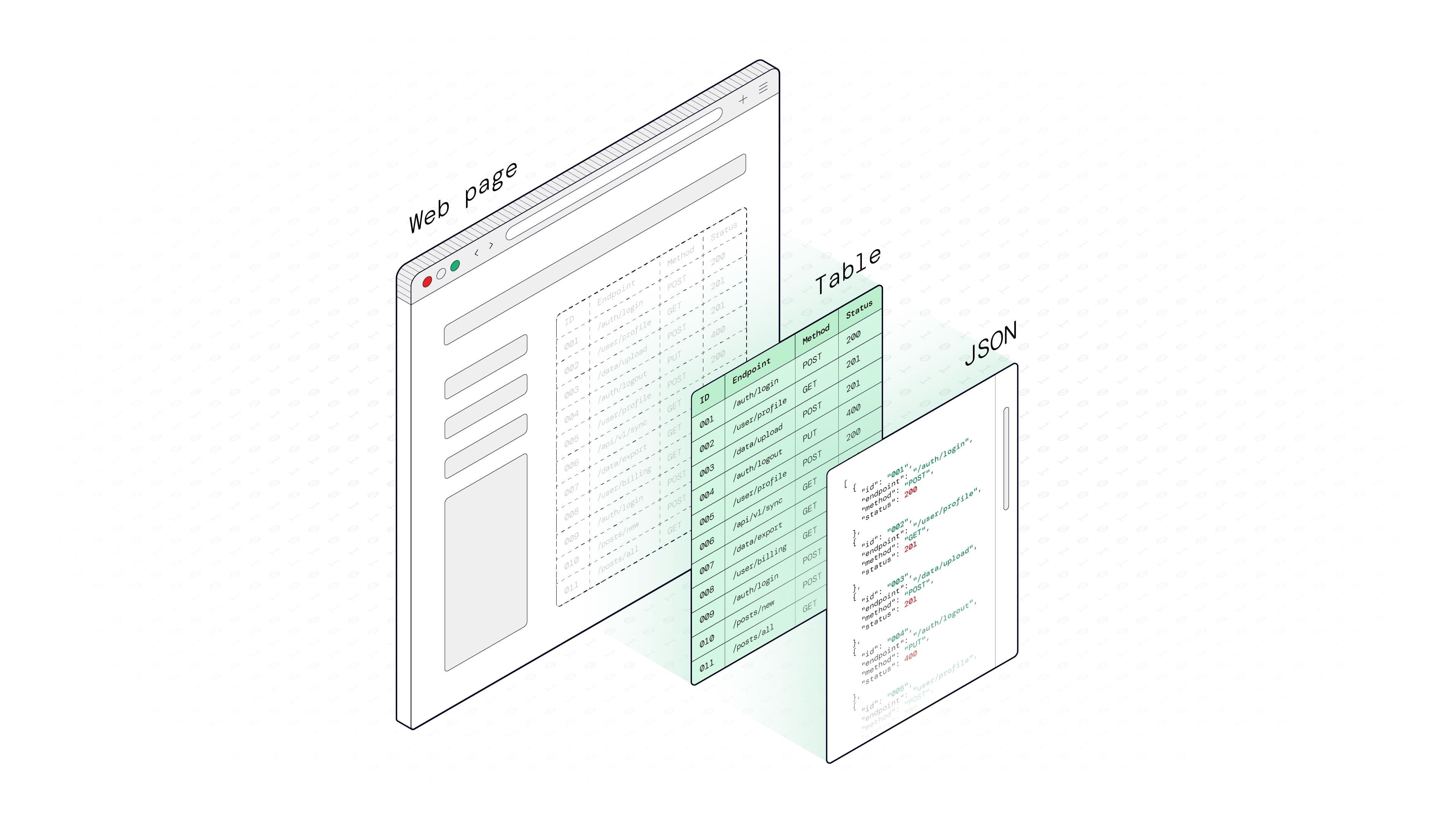

Gaffa's demo table and the JSON output

This approach is flexible. If you need to clean up values, handle merged cells, or do any custom transformation before saving, BeautifulSoup gives you full control over the parsed data.

Approach 2: Using the parse_table action to get JSON with no processing

If you just want the data and don't need custom processing, Gaffa's parse_table action eliminates the need for the BeautifulSoup step entirely. It finds the table on the page, reads the headers, and returns a ready-to-use JSON object directly.

Here's what the action does internally:

- It locates the table using your CSS selector

- Converts the header row into property names (lowercased, non-alphanumeric characters replaced with underscores)

- Then maps each cell value to its corresponding header for every row, returning a clean JSON array with no post-processing required on your end.

Using parse_table on the Demo Site

The Gaffa demo site at demo.gaffa.dev is a simple test environment with pre-built pages designed for trying out Gaffa actions before pointing them at a real site

Using Gaffa's parse_table action

That's the entire script: no HTML parsing, no BeautifulSoup, no column mapping. The output is already shaped as a list of objects you can use immediately.

Real-World Example

Let's apply parse_table to Wikipedia's List of Countries by GDP (Nominal), a clean, publicly accessible table of financial data. Wikipedia uses a consistent CSS class on all its data tables, making it straightforward and reliable to target with a selector.

Still using the same fetch_parsed_table function we wrote for the demo site, just swap the if __name__ block with this:

Wikipedia table parsing using Gaffa

Sample output:

Sample table data

See the full sample output on GitHub.

Notice how the original column headers, like “Country/Territory” and “IMF 2026”, are automatically normalised into “country_territory” and “imf_2026”. Spaces and special characters are replaced with underscores, and everything is lowercased, so the output is immediately usable without any cleanup.

No proxy_location is needed here, since Wikipedia is globally accessible, but for sites that restrict access by geography, you can simply add proxy_location="us" or another supported region to route the request through the appropriate IP address.

Wikipedia's GDP table scraped to JSON with parse_table.

Which Approach Should You Use?

Setup

More code

parse_table

MInimal code

Control

Full

parse_table

Limited to what Gaffa returns

Best for

Complex tables, complex logic

parse_table

Standard tables, fast extraction

Post-processing

Yes

parse_table

None needed

If the table is straightforward and you just need the data, use parse_table. If you need to do any custom processing, such as merging columns, skipping rows, or reformatting values, use capture_dom with BeautifulSoup.

Either way, you're not managing browser infrastructure, rotating proxies, or writing anti-bot workarounds. Gaffa handles that layer so your Python code stays focused on what matters: the data.

Don't Want to Write Code Yet? Use the Playground

The Gaffa API Playground.

If you want to test parse_table or capture_dom before writing any Python, the Gaffa Playground lets you run requests directly from your browser with no code and no setup.

Just paste in your JSON payload, hit Send Request, and see the output instantly. It's a great way to confirm your selector is working and the table is returning the right data before you wire it up in a script.

Web scraping tables in Python doesn't have to mean wrestling with browser setup, proxy rotation, or anti-bot headaches. Whether you want fine-grained control with capture_dom and BeautifulSoup, or a clean JSON result straight from parse_table, Gaffa gives you a straightforward path to the data. Sign up at gaffa.dev, grab your API key, and start with the playground to see it in action.